Amazon Q Security

Amazon Q, AWS's AI assistant, refused every security question with a templated apology. The security team had good reason: hallucinated findings are dangerous. But CSAT scores and customer interviews showed the refusal was failing customers at their most urgent moments. I designed a chat extension that routed security questions to real GuardDuty data, giving Q a trusted source instead of trying to make it smarter. The design was completed before the API integration it depended on had been built, so it was shelved. The pattern I proposed now exists across multiple AWS surfaces.

- Pattern adopted across AWS

- Ended blanket refusals in Q

- Patterns added to Q library

- Security CX Team

- Amazon Q Platform Team

Senior Product Designer

The Problem

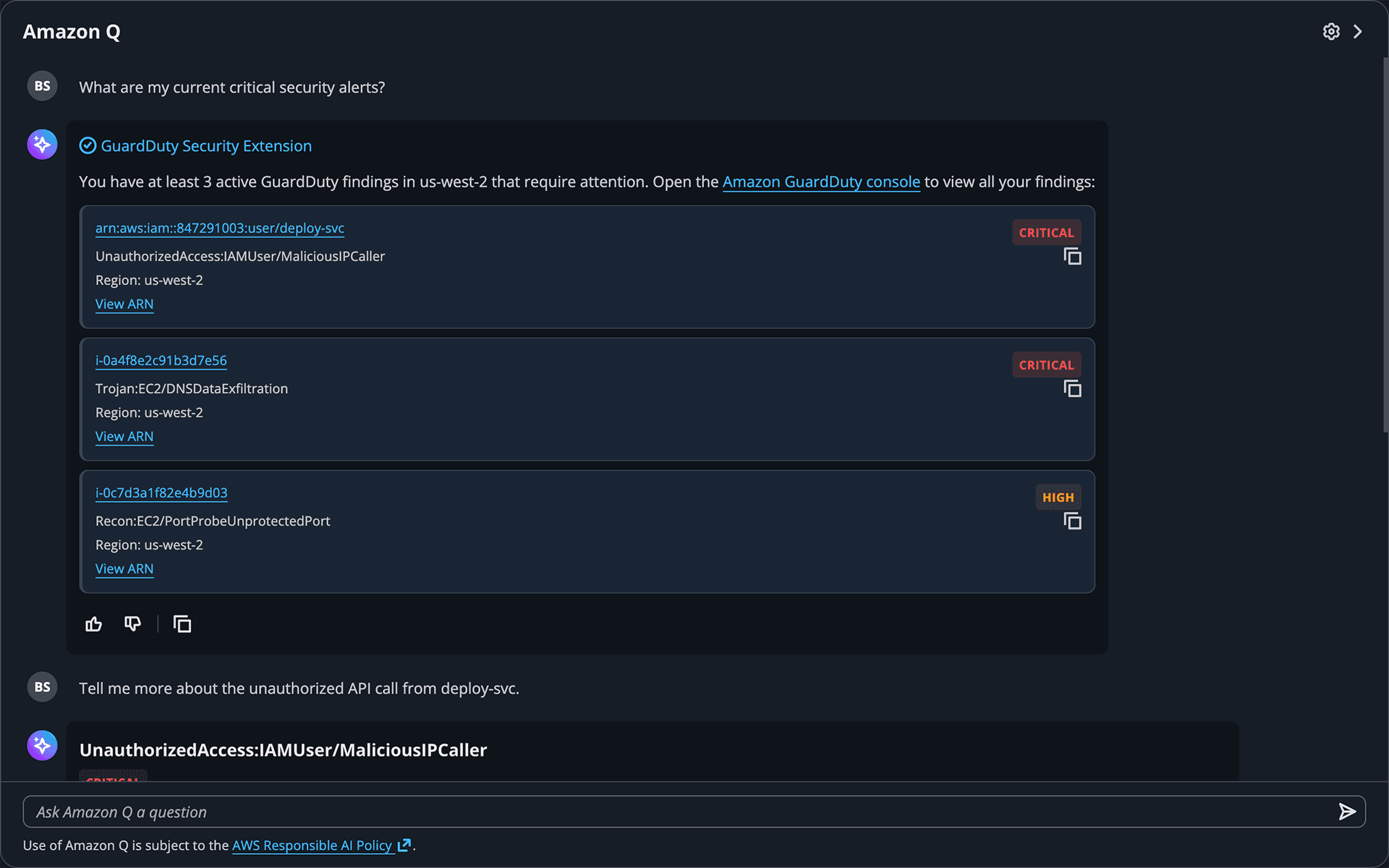

When customers asked Q anything related to security, even straightforward questions about their own environment, the system returned a templated refusal. This was deliberate: Q's underlying model could hallucinate findings, fabricate severity levels, or give incorrect remediation advice. In a security context, a wrong answer isn't just unhelpful; it's dangerous.

But the refusal created its own problem. CSAT scores for the Q widget showed a clear pattern: customers who attempted security-related questions reported significantly lower satisfaction. Customer interviews added texture. Customers weren't confused by the refusal; they were frustrated, because Q should have been perfect for exactly these urgent, contextual questions about active threats.

Discovery

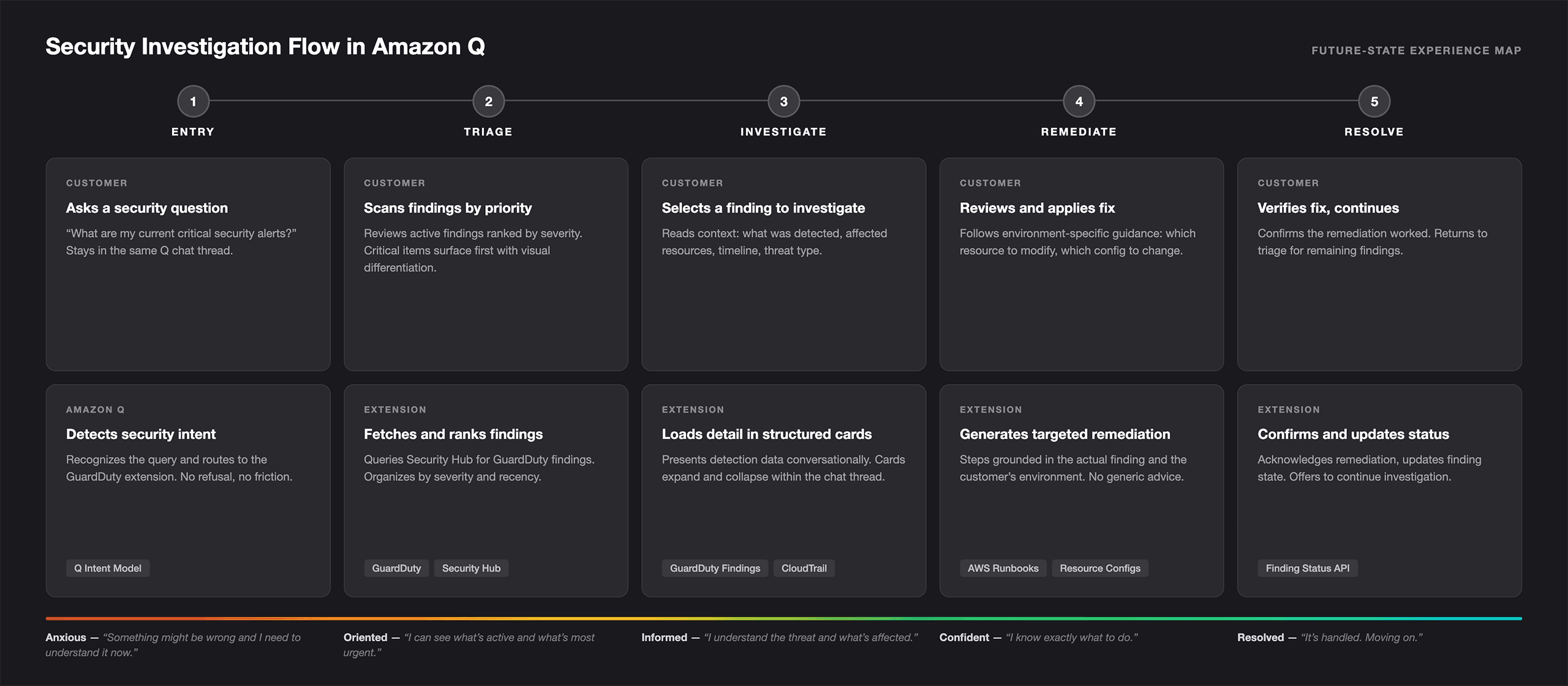

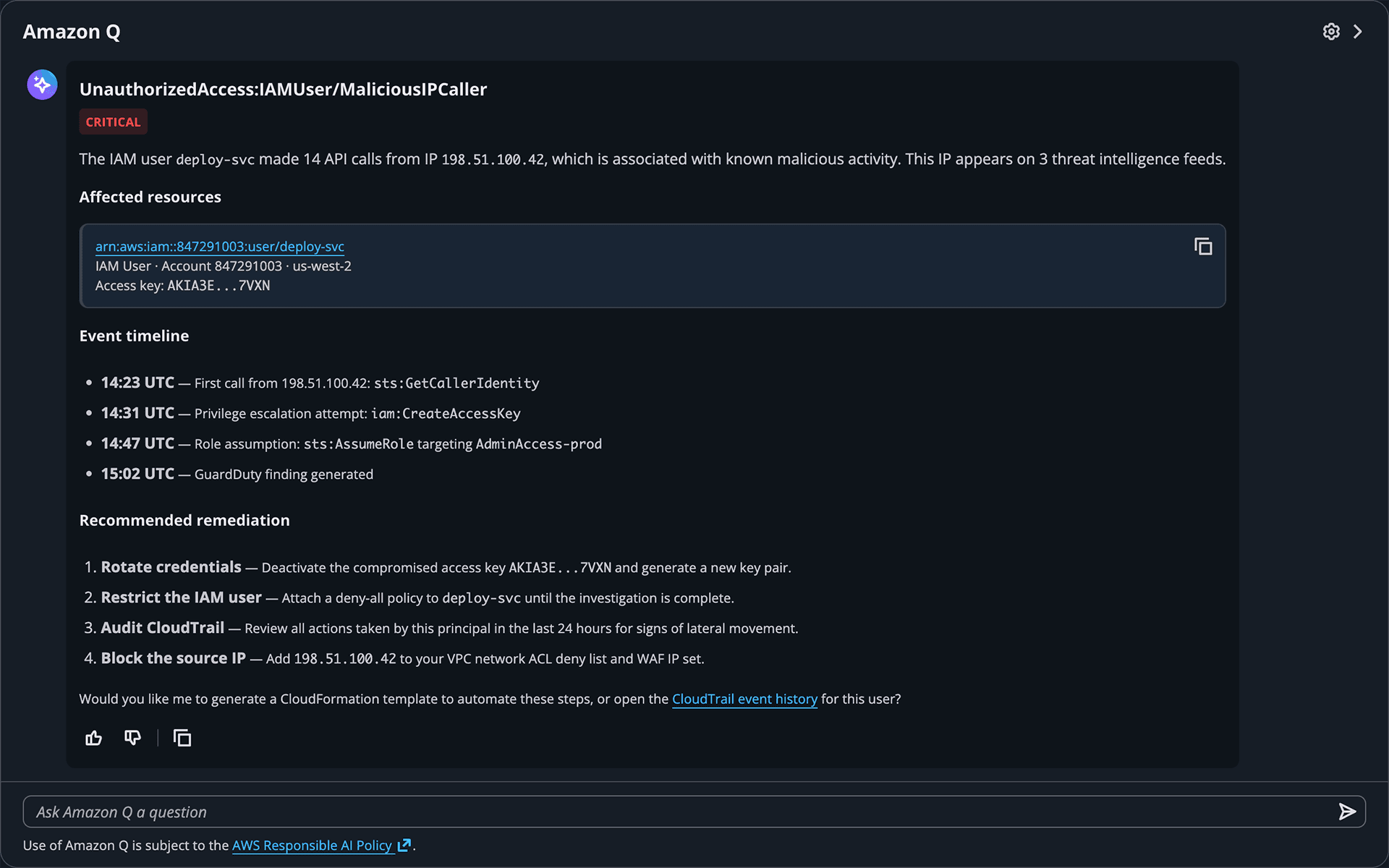

The Q widget was being built in parallel, with no documented design patterns, no component library, and no interaction guidelines. I conducted a heuristic review of every Q integration built to that point, cataloging the implicit patterns forming across teams: how they handled multi-step flows within chat, surfaced structured data conversationally, and transitioned from general responses to service-specific deep dives. The review revealed usable patterns I could adopt, as well as gaps where the security use case would require new thinking.

The key insight that unlocked the design direction was reframing the problem: this wasn't about making Q smarter about security. It was about giving Q a trusted source to call. If Q could route security questions to a specialized extension backed by actual GuardDuty data (real findings, real severity levels, real remediation guidance), the responses would be grounded in truth rather than generated from patterns.

The Design

Designing Without a Design System

Where It Landed

The design was completed and well received, but ultimately shelved because the API integration between Q and the security extension infrastructure hadn't been built yet. This is a reality of designing at the edge of a platform's capabilities. What's remarkable is how closely the pattern maps to what AWS eventually built: Q now answers security questions by querying service data, Security Hub findings route through AWS Chatbot to Slack and Microsoft Teams for conversational investigation, and AWS Security Incident Response launched with a dedicated AI agent that gathers evidence and presents correlated findings. The unified in-console experience remains the direction these capabilities are converging toward. The concept anticipated where the platform needed to go.